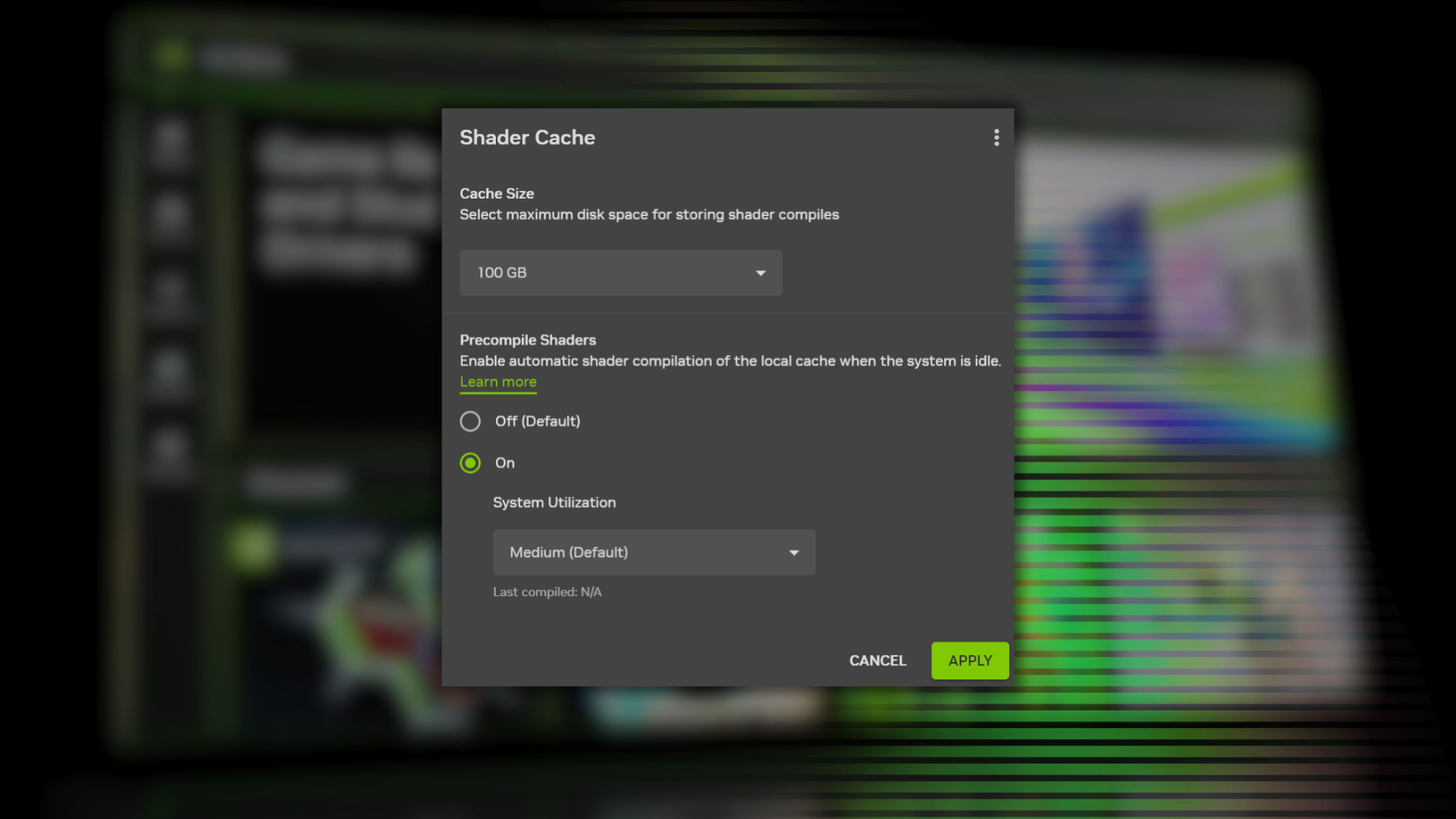

Nvidia has introduced a beta Auto Shader Compilation (ASC) feature in the Nvidia App that automatically recompiles game shaders in the background after GPU driver updates, reducing the wait for runtime shader compilation. The feature is disabled by default and is configured under Global Settings > Shader Cache, where users can set the cache size (for example, a 100 GB cache can store shaders for about 20 modern AAA titles) and choose a low, medium, or high system utilization limit. ASC only recompiles shaders that have already been compiled locally once; it does not remove the initial compile. It also signals that Nvidia is moving toward industry-level precompiled shader delivery (Microsoft's Advanced Shader Delivery (ASD), Intel's precompiled initiatives), which could further reduce local shader work over time.
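The cache example implies a rough average of 5 GB of shader cache per title (100 GB across about 20 games). The sketch below only restates that arithmetic; the function names are hypothetical, not part of the Nvidia App, and real per-game cache sizes will vary widely.

```python
# Back-of-the-envelope cache sizing based on the article's example figure.
# Assumption: cache use scales linearly with the number of titles.

def per_title_gb(cache_gb: float, titles: int) -> float:
    """Average cache space per title implied by a cache size and title count."""
    return cache_gb / titles


def titles_that_fit(cache_gb: float, avg_title_gb: float) -> int:
    """Number of whole titles that fit in a cache of the given size."""
    return int(cache_gb // avg_title_gb)


avg = per_title_gb(100, 20)          # 5.0 GB per title, from the 100 GB / ~20 titles example
fits_in_50 = titles_that_fit(50, avg)  # 10 titles under the same average
```

Under this linear assumption, halving the cache to 50 GB would cover roughly 10 titles before older shaders would need to be evicted.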

This is less about incremental UX and more about platform capture: software that reduces friction at game startup raises the effective value of the GPU beyond raw FLOPS, widening an install-base moat that hardware alone can't buy. Over 12–24 months that moat compounds: game developers and distributors prefer the GPU ecosystem that minimizes player churn and support tickets, which in turn increases OEM and cloud partnership leverage for the vendor with the best tooling. Second-order winners include cloud/infrastructure providers and CDN partners if distribution of prebuilt assets becomes standard; conversely, silicon vendors who can't match the software stack risk becoming commoditized suppliers selling only hardware.

On the margin, SSD capacity and I/O bandwidth on consumer PCs will become a recurring customer touchpoint (cache sizing, eviction policies), creating small but persistent demand for consumer NVMe upgrades and potential monetization opportunities (tiered cache settings, paid profiles). Tail risks are technical regressions, security/anti-cheat conflicts, and consumer pushback over background resource use or storage bloat; each could stretch adoption timelines from months to quarters.

A faster catalyst path would be Microsoft/DirectX-level integration or major publishers shipping precompiled assets, which would materially accelerate adoption within 3–9 months; absent that, expect a gradual 12–24 month rollout across the installed base. From a competitive angle, the market underestimates how quickly software conveniences translate into pricing power in expansion areas (e.g., cloud gaming, Game Ready services). But don't overstate the short-term uplift in unit demand: this is stickiness, not a direct driver of a GPU replacement cycle, so valuation moves should be sized accordingly.

Overall Sentiment: mildly positive

Sentiment Score: 0.25

Ticker Sentiment