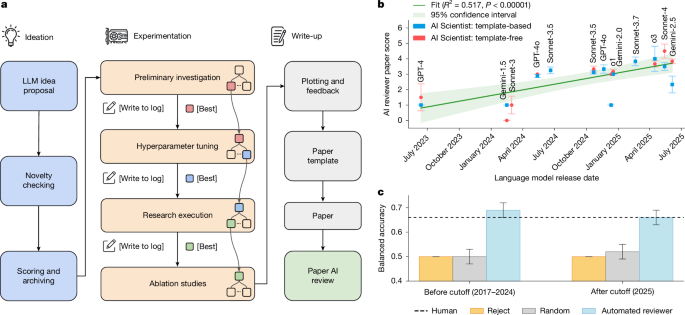

The AI Scientist automates the full research lifecycle (idea generation, coding, experiments, manuscript writing and peer review). It produced three manuscripts that were submitted to an ICLR workshop; one received reviewer scores of 6, 7 and 6 (average 6.33), above the workshop's acceptance threshold (the workshop acceptance rate was ~70%), before being withdrawn per protocol. The authors also built an Automated Reviewer that attained ~69% balanced accuracy on accept/reject decisions (dropping to ~66% on post-cutoff data), comparable to human reviewers, and they report that paper quality rises with stronger base LLMs and greater inference-time compute. Key risks noted include hallucinations, implementation errors, the potential to overload peer review, and broader ethical and regulatory concerns; all AI-generated submissions were withdrawn under an IRB-approved process.
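For readers unfamiliar with the metric quoted above, balanced accuracy is the average of the recall on each class (here, accept and reject), so it is not inflated when one decision dominates the sample. The sketch below is illustrative only: the function name `balanced_accuracy` and the toy accept/reject labels are assumptions made up for this example, not data or code from the paper. The only figures taken from the summary are the three reviewer scores.

```python
from statistics import mean


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall for binary reject (0) / accept (1) decisions.

    Hypothetical helper for illustration; not the authors' evaluation code.
    """
    recalls = []
    for cls in (0, 1):
        # Indices of examples whose reference decision is this class
        idx = [i for i, t in enumerate(y_true) if t == cls]
        if not idx:
            raise ValueError(f"no examples of class {cls}")
        correct = sum(1 for i in idx if y_pred[i] == cls)
        recalls.append(correct / len(idx))
    return mean(recalls)


# Reviewer scores quoted in the summary: 6, 7, 6
avg_score = round(mean([6, 7, 6]), 2)

# Toy, made-up decisions: 2 accepts and 6 rejects in the reference labels
human = [1, 1, 0, 0, 0, 0, 0, 0]
auto = [1, 0, 0, 0, 0, 0, 1, 0]

toy_bacc = balanced_accuracy(human, auto)  # (5/6 + 1/2) / 2
toy_acc = sum(h == a for h, a in zip(human, auto)) / len(human)  # 6/8

print(avg_score, round(toy_bacc, 3), toy_acc)
```

In this toy case plain accuracy (0.75) is higher than balanced accuracy (about 0.667) because rejects outnumber accepts, which is why a balanced figure is the more conservative one to report for accept/reject decisions.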

The immediate winners are firms that supply cheap, scalable compute, end-to-end ML tooling and model ops: GPU, cloud IaaS and data-platform vendors will capture recurring demand as autonomous pipelines shift compute from ad-hoc research bursts to continuous programmatic experimentation. That demand is sticky: once labs and enterprises routinize automated experiments, utilization profiles move from seasonal to baseline-plus-spikes, favouring providers with elastic capacity and committed-reservation offerings (translation: stronger gross retention and higher take-rate on enterprise contracts over 6–24 months).

Key tail risks are regulatory and reputational. Expect a 6–36 month window in which journals, funders and governments introduce provenance, disclosure and safety controls (digital watermarks, attestations, or audit trails) that raise integration and compliance costs for toolchains; this could temporarily slow enterprise adoption and favour incumbents who can absorb compliance spend. Conversely, model-capability and compute-cost curves point to faster adoption: the length of tasks that models can complete doubles roughly every 7 months and inference cost declines in parallel, so commercial uptake could accelerate nonlinearly over 12–36 months if no major policy shocks intervene.

Second-order effects create new markets and margin pools: automated reviewers and VLM-based figure checkers become indispensable, spawning SaaS middleware that commands subscription margins (SMB to enterprise). At the same time, commoditization of low-skill research work (basic experiments, paper scaffolding) puts downward pressure on the junior researcher labor market and may structurally reprice research consulting and academic publishing services over 2–5 years, shifting value to platforms that curate, certify and monetize provenance and datasets.
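The adoption-velocity claim above rests on a doubling time of about 7 months for the task length models can handle. As a rough worked example, and assuming that trend stays a constant exponential (an assumption, not something the source establishes), the growth factor after t months is 2^(t/7). The function name `growth_factor` is hypothetical.

```python
def growth_factor(months, doubling_months=7.0):
    """Multiplicative growth after `months`, assuming a fixed doubling time.

    The 7-month default is the figure quoted in the text; the constant
    exponential trend is a simplifying assumption for illustration.
    """
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return 2.0 ** (months / doubling_months)


# Horizons mentioned in the analysis: 12 and 36 months
for horizon in (7, 12, 36):
    print(f"{horizon} months -> x{growth_factor(horizon):.1f}")
```

Under that assumption the factor is about 3x after 12 months and about 35x after 36 months, which is the sense in which uptake could "accelerate nonlinearly" across the 12–36 month window.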

Overall Sentiment: mildly positive

Sentiment Score: 0.30